LLM-driven automation can detect "epistemological deficiencies": it checks whether the logic of a paper holds up, not just the grammar.

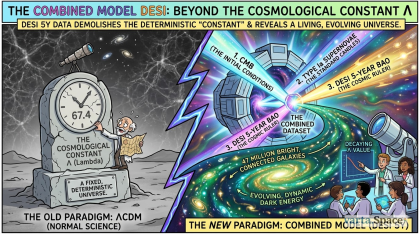

The Crisis of the "Broken Skeleton"

Modern scientific publishing is facing an "Epistemological Crisis." We have more papers than ever, but less trust in their foundational logic. Human peer reviewers are exhausted, often focusing on "surface-level" errors while missing deep-seated logical fallacies.

The current paradigm is Post-Hoc Correction (fixing things after they are written). The new paradigm we propose is the Epistemological Sieve: a pre-publication audit that tests the "logic-skeleton" of a manuscript before it ever reaches a human reviewer.

1. Defining the Sieve: Beyond Grammar and Fact-Checking

Most people mistake AI reviewers for advanced spell-checkers. However, an Epistemological Sieve operates on three distinct levels:

Grammatical Integrity: The "Skin." Is the language clear and professional?

Subject-Matter Alignment: The "Muscle." Does the terminology match the established consensus of the field (e.g., Mangrove Ecology)?

Epistemic Auditing: The "Skeleton." This is the core. It asks: Is the inferential bridge between your data and your conclusion structurally sound?

Example: If a paper provides data on Avicennia marina growth in Kerala but concludes that all global mangroves are resilient to sea-level rise, the Sieve flags an over-generalization fallacy. This isn't a grammar error; it’s a failure of logic.

2. The "Validation of Intent" Framework

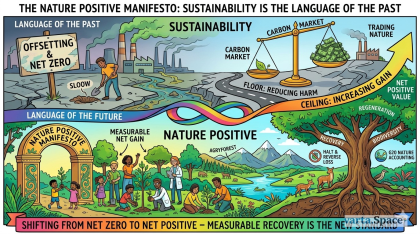

On varta.space, we advocate for Validation of Intent. The Autonomous Reviewer serves this by filtering out "Noise Science": papers designed to look like research (predatory publishing) but lacking the "Verification of Source."

By using the Sieve, an editor at a journal like Agryforest can decide:

Direct Pass: The manuscript's logic is flawless; proceed to human Double-Blind Peer Review.

Autonomous Recalibration: The AI finds "epistemic gaps." The author is invited to fix the logic first.

Hard Reject: The "Skeleton" is non-existent; the intent is not scientific discovery but data-padding.
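The three outcomes above can be sketched as a small triage function. This is only an illustration under stated assumptions, not how any real Sieve is implemented: the `Claim` model, the scope labels, and the rule that "no evidence at all" means a missing skeleton are all hypothetical, and a real system would need an LLM or a human to extract the scopes from the manuscript in the first place.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DIRECT_PASS = "Direct Pass"
    AUTONOMOUS_RECALIBRATION = "Autonomous Recalibration"
    HARD_REJECT = "Hard Reject"


@dataclass(frozen=True)
class Claim:
    """One conclusion plus the evidence offered for it (hypothetical model)."""
    text: str
    evidence_scope: frozenset  # what the data actually covers
    claimed_scope: frozenset   # what the conclusion asserts


def epistemic_gaps(claims):
    """Return claims whose conclusion reaches beyond their data (over-generalization)."""
    return [
        c for c in claims
        if c.evidence_scope and not c.claimed_scope <= c.evidence_scope
    ]


def triage(claims):
    """Map a manuscript's claims to one of the three editorial outcomes."""
    supported = [c for c in claims if c.evidence_scope]
    # Assumption: if no claim rests on any evidence, the "Skeleton" is non-existent.
    if not supported:
        return Decision.HARD_REJECT
    # Any over-generalized or unsupported claim counts as an epistemic gap to fix.
    if epistemic_gaps(claims) or len(supported) < len(claims):
        return Decision.AUTONOMOUS_RECALIBRATION
    return Decision.DIRECT_PASS


# The Kerala example from section 1: local data, global conclusion.
kerala = Claim(
    text="Mangroves are resilient to sea-level rise",
    evidence_scope=frozenset({"Avicennia marina, Kerala"}),
    claimed_scope=frozenset({"all global mangroves"}),
)
print(triage([kerala]).value)
```

Comparing scopes as plain sets is a deliberately crude stand-in for the "inferential bridge" check; it only shows where such a check would sit in the editor's decision.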

3. Why This Rebuilds Trust

Trust is lost when "weak science" makes it into the public record. When the public sees a study retracted because its logic was flawed, they lose faith in the entire scientific method.

The Sieve provides a two-part standard (the "Dual-Audit"):

Standard 1 (Machine): Ensures total logical consistency and lack of bias in formatting.

Standard 2 (Human): Ensures the research is actually valuable and novel for the community.

4. Conclusion: A New Scientific Method

We are moving away from the "Author vs. Reviewer" adversarial model. The Autonomous Reviewer becomes a "Cognitive Partner" to the Editor. It allows human experts to stop being "fact-checkers" and start being "visionaries."

The Epistemological Sieve: How Autonomous Reviewers Can Rebuild Scientific Trust

© 2026 varta.space. All rights reserved.
